Splunk enterprise dfs

12/27/2023

In simple terms, Hadoop is a framework for handling "Big Data". It scales from a single server to thousands of machines, each providing local computation and storage. Its storage component is the Hadoop Distributed File System (HDFS), and its processing component is the MapReduce programming model. Hadoop splits files into large blocks and distributes them across the machines in a cluster.
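To make the split-then-process idea concrete, here is a minimal single-process sketch of a MapReduce-style word count in plain Python. It does not use Hadoop or any Hadoop API. The function names (`split_into_blocks`, `map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the `block_size` parameter are made up for illustration, and a "block" here is just a few lines of text rather than a real HDFS block.

```python
from collections import defaultdict
from itertools import chain


def split_into_blocks(text, block_size):
    """Split text into lists of at most block_size lines (a stand-in for file blocks)."""
    lines = text.splitlines()
    return [lines[i:i + block_size] for i in range(0, len(lines), block_size)]


def map_phase(block):
    """Emit a (word, 1) pair for every word in one block."""
    return [(word.lower(), 1) for line in block for word in line.split()]


def shuffle(pairs):
    """Group all emitted values by key, as happens between the map and reduce steps."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Sum the values collected for each key."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(text, block_size=2):
    """Run the toy pipeline: split, map each block, shuffle, reduce."""
    blocks = split_into_blocks(text, block_size)
    mapped = chain.from_iterable(map_phase(block) for block in blocks)
    return reduce_phase(shuffle(mapped))


if __name__ == "__main__":
    print(word_count("big data\nbig cluster\ndata data"))
```

In a real cluster each block would be mapped on the machine that stores it, and the shuffle would move data over the network. Here everything runs in one process, so only the shape of the computation carries over.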

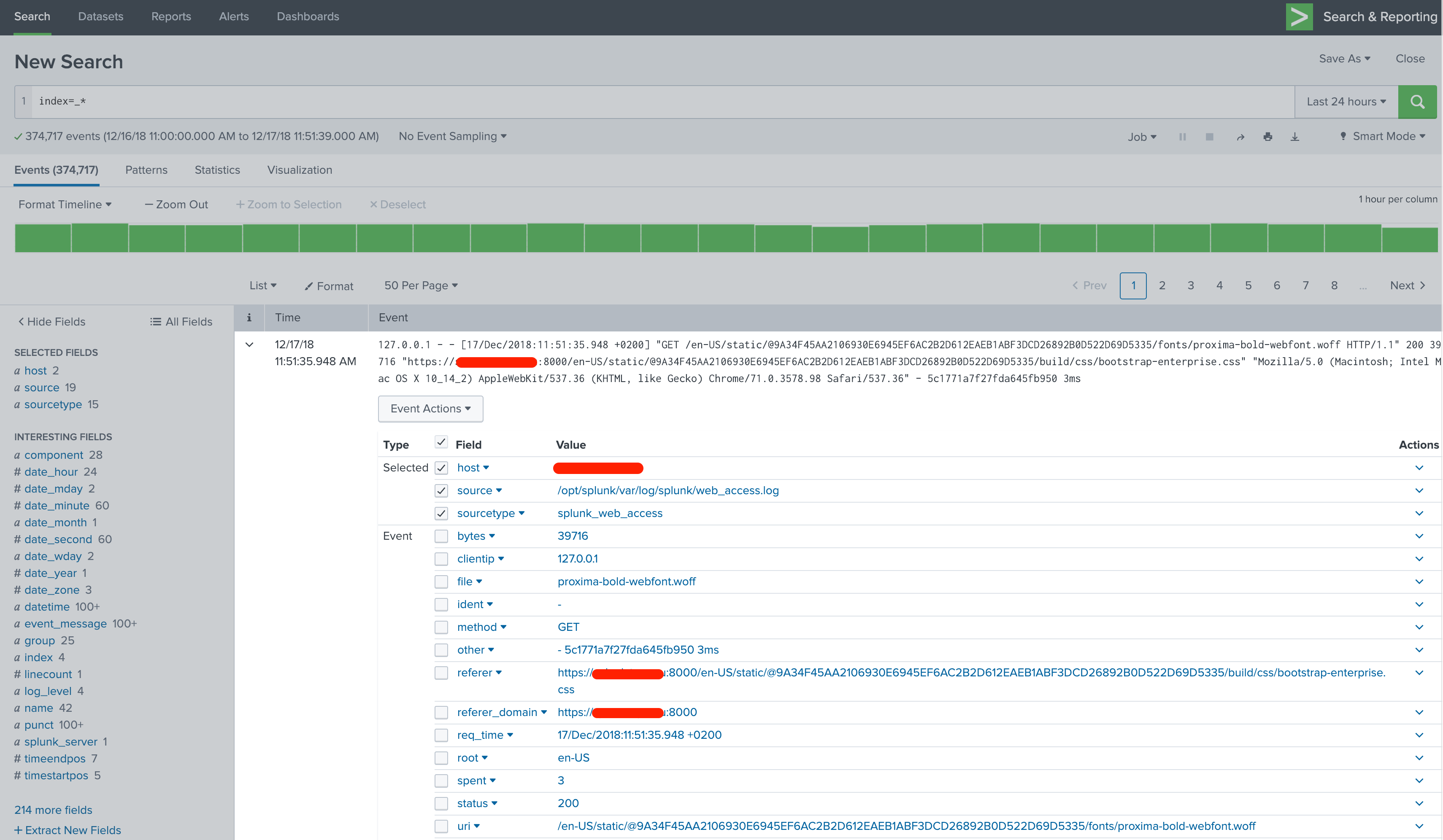

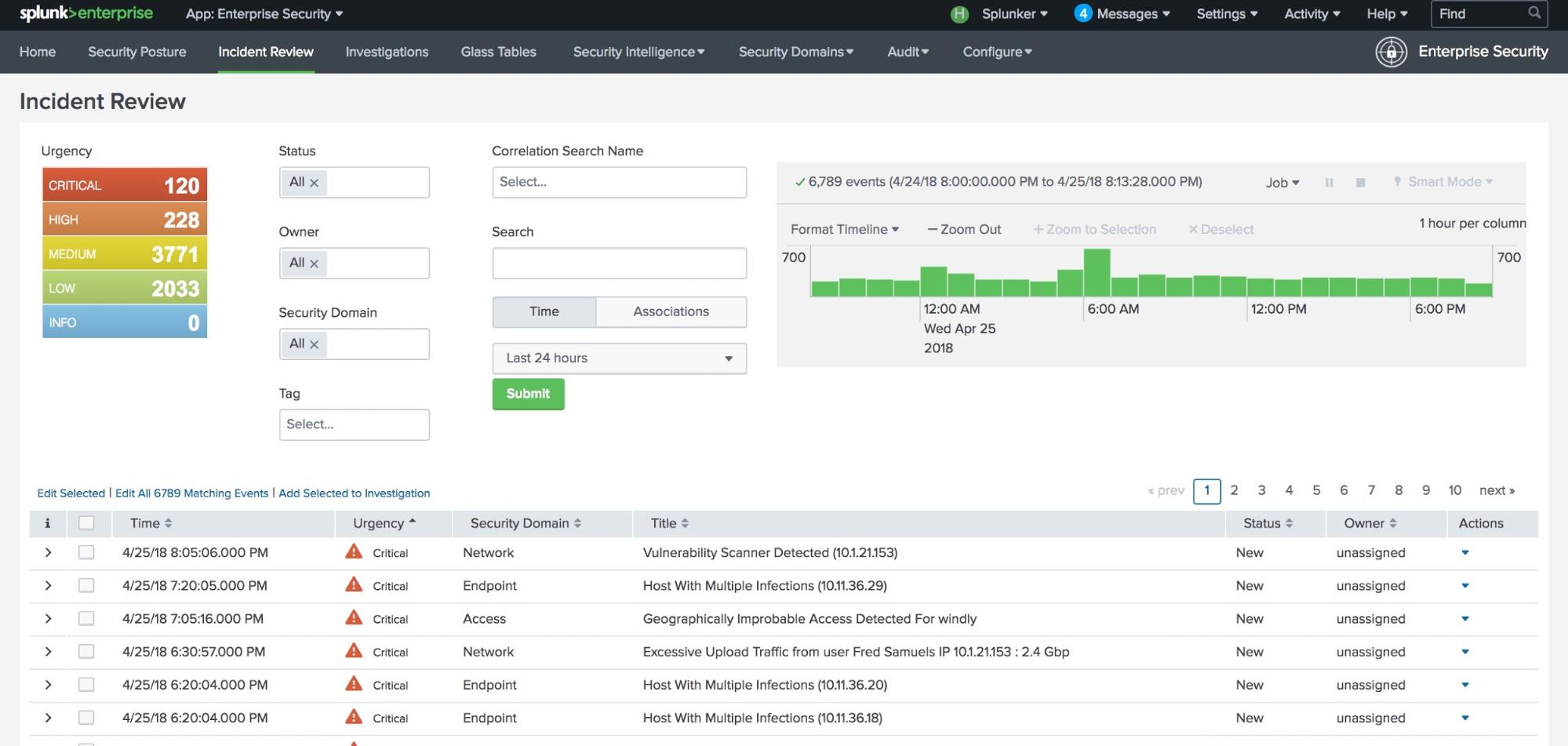

The Apache Hadoop software library is a framework that enables distributed processing of massive data volumes across clusters of machines using simple programming models. In this article, we will explore Hadoop vs. Splunk. Splunk provides web-based access to tools for indexing, searching, monitoring, and analyzing machine data. It is a platform for log analytics: it analyzes log data and visualizes the results. Hadoop, by contrast, processes massive amounts of data using a distributed file system and the MapReduce technique.

Note: The parameters can be placed in any order.

In case it was not specified, use default.
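As a rough illustration of what "indexing and searching machine data" means, the sketch below builds a tiny inverted index over log lines and looks up the lines that contain every search term. It is a toy under stated assumptions, not how Splunk is implemented and not Splunk's search language. The names `build_index` and `search` and the sample log lines are hypothetical.

```python
from collections import defaultdict


def build_index(log_lines):
    """Map each lowercase token to the set of line numbers where it appears."""
    index = defaultdict(set)
    for line_no, line in enumerate(log_lines):
        for token in line.lower().split():
            index[token].add(line_no)
    return index


def search(index, log_lines, *terms):
    """Return the log lines that contain all of the given terms, in original order."""
    if not terms:
        return []
    # Intersect the posting sets; a term that was never indexed yields an empty set.
    hits = set.intersection(*(index.get(term.lower(), set()) for term in terms))
    return [log_lines[i] for i in sorted(hits)]


if __name__ == "__main__":
    # Made-up sample data for the demo.
    logs = [
        "ERROR disk full on node1",
        "INFO job started",
        "ERROR job failed on node2",
    ]
    idx = build_index(logs)
    print(search(idx, logs, "error", "job"))
```

Building the index once and answering queries from it is the design choice that makes repeated searches cheap compared with rescanning every log line per query.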
